Adytum

An experimental self-hosted AI agent swarm designed to be a digital companion. Engineered with hierarchical intelligence, cognitive vector memory, and a reactive event-driven backbone to automate complex digital workflows locally.

The Challenge

Mainstream AI agents operate in stateless, linear prompt loops, lacking the persistent memory and proactive autonomy required for a true digital assistant. Adytum was built to address three linked challenges (local data sovereignty, long-term conceptual retrieval, and autonomous background execution) through a decoupled, multi-tier swarm architecture.

The Solution

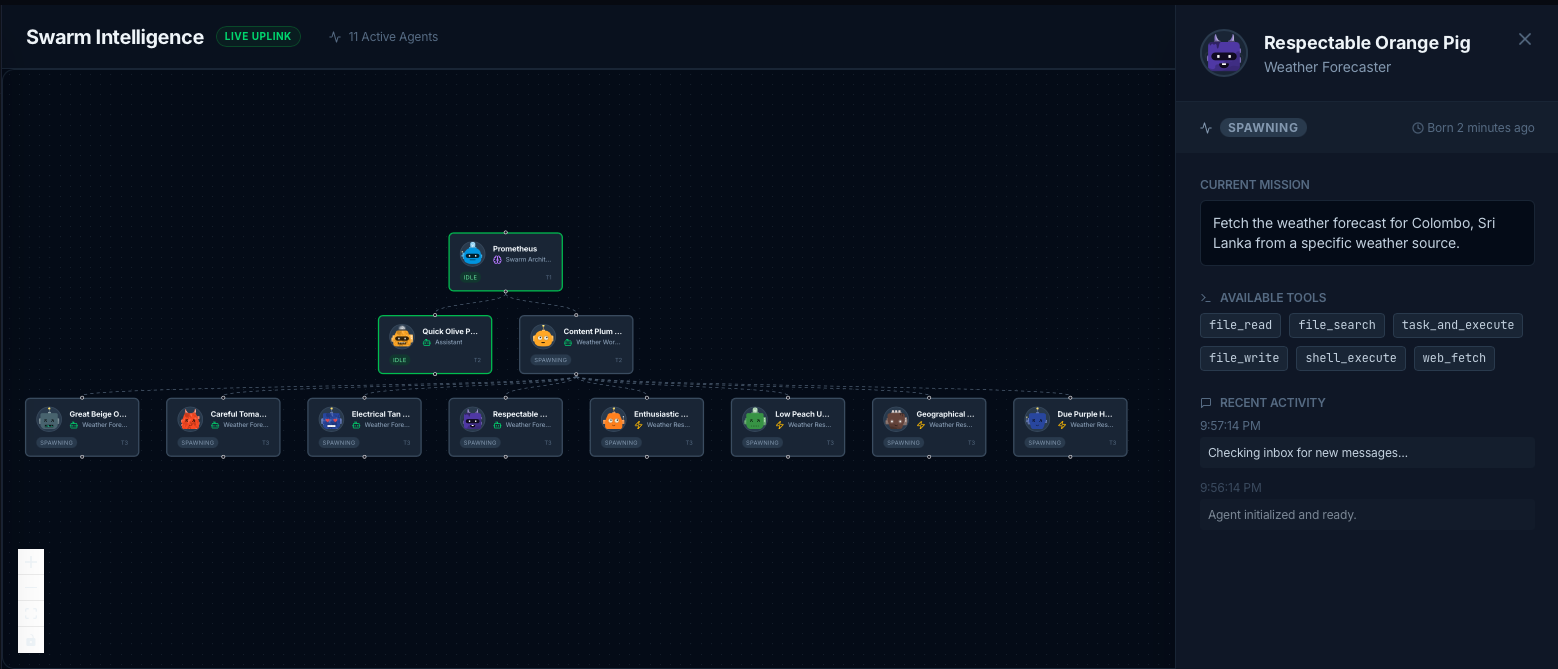

- Hierarchical Swarm Orchestration: Implemented a T1/T2/T3 command structure to prevent recursion failure and enable massive parallel task delegation.

- Cognitive Memory Architecture: Developed a dual-layer memory system using SQLite and FTS5 for episodic retention and conceptual graph mapping.

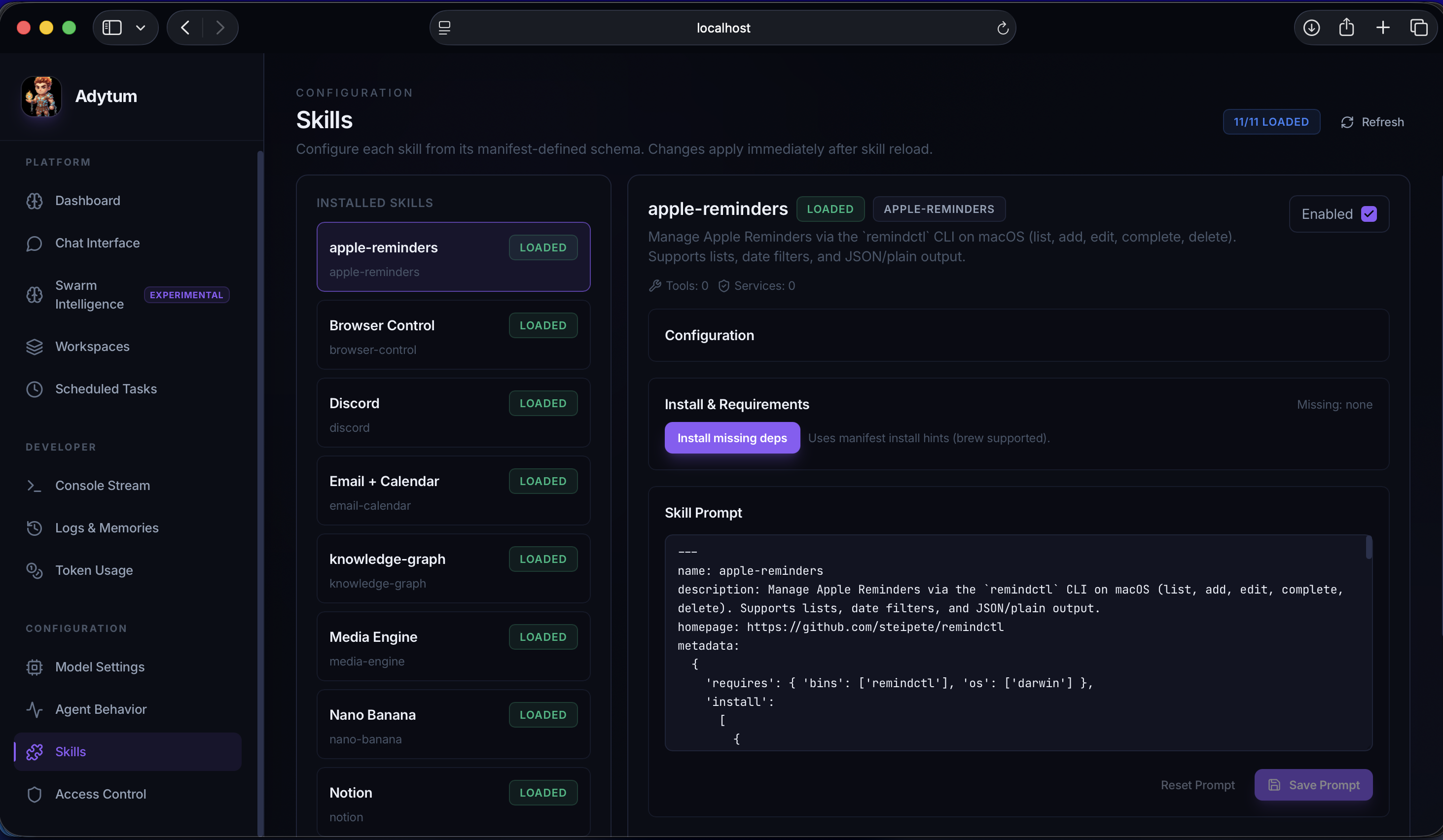

- Skill-Based Plugin System: Built a hot-reloading architecture that allows the agent to dynamically acquire new tools and domain-specific instructions at runtime.

🔬 Abstract: The Sovereignty of Local Intelligence

Adytum is a research-driven, self-hosted AI ecosystem designed to bridge the gap between simple LLM wrappers and truly autonomous digital companions. By moving the "brain" of the agent from the cloud to the user’s local hardware, Adytum explores a new frontier in Sovereign Intelligence.

The research focuses on four core pillars: Hierarchical Orchestration, Cognitive Memory Consolidation, an Extensible Skill System, and Proactive Autonomy. This paper details the architectural logic and design decisions that emerged during the development of the Adytum Gateway and its supporting swarm.

🏛️ Pillar 1: Hierarchical Swarm Orchestration

A single-agent ReAct loop is prone to "Infinite Loop Bias" when faced with high-complexity tasks. Adytum mitigates this through a deterministic, tiered chain of command.

1.1 The Tiered Command Structure

- Tier 1 (The Architect): Acts as the strategist. It maintains the user’s high-level goal and is programmatically prohibited from executing low-level tools. It must output a delegation plan to Tier 2 entities.

- Tier 2 (Managers): Workflow orchestrators. They receive high-level directives (e.g., "Research and summarize repo X") and spawn Tier 3 Workers in parallel batches.

- Tier 3 (Workers): Task-specific executors (e.g., `file_read`, `shell_execute`). Workers are ephemeral nodes that report back to their Manager.
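The chain of command above can be sketched in a few lines. This is a minimal illustration, not Adytum's actual implementation: the `DelegationPlan`, `Directive`, and `WorkerTask` shapes, the function names, and the stubbed tool bodies are all assumptions made for the example. The point it shows is structural: the Architect function has no access to the tool registry and can only return a plan, while Managers fan tasks out to Workers in a parallel batch.

```typescript
// Hypothetical types: names and shapes are illustrative, not Adytum's real API.
type Tool = (input: string) => Promise<string>;

interface WorkerTask { tool: string; input: string; }
interface Directive { manager: string; goal: string; tasks: WorkerTask[]; }
interface DelegationPlan { goal: string; directives: Directive[]; }

// Tier 3 tool registry with stubbed implementations (no real I/O happens here).
const tools = new Map<string, Tool>([
  ["file_read", async (path) => `contents of ${path}`],
  ["shell_execute", async (cmd) => `ran ${cmd}`],
]);

// Tier 1: the Architect never touches `tools`; it can only describe work.
function architectPlan(goal: string): DelegationPlan {
  return {
    goal,
    directives: [
      {
        manager: "research",
        goal: `Research and summarize ${goal}`,
        tasks: [
          { tool: "file_read", input: "README.md" },
          { tool: "shell_execute", input: "git log -5" },
        ],
      },
    ],
  };
}

// Tier 3: an ephemeral worker runs exactly one tool call and reports back.
async function runWorker(task: WorkerTask): Promise<string> {
  const tool = tools.get(task.tool);
  if (tool === undefined) throw new Error(`unknown tool: ${task.tool}`);
  return tool(task.input);
}

// Tier 2: a Manager fans one directive out to a parallel batch of workers.
async function runManager(directive: Directive): Promise<string[]> {
  return Promise.all(directive.tasks.map(runWorker));
}

// Wire the tiers together: plan first, then delegate, then collect reports.
async function runSwarm(goal: string): Promise<string[]> {
  const plan = architectPlan(goal);
  const reports = await Promise.all(plan.directives.map(runManager));
  return ([] as string[]).concat(...reports);
}
```

Because `Promise.all` preserves input order, the reports come back in the order the Architect listed the tasks, even though the workers run concurrently.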

1.2 Lifecycle Persistence: Cryostasis & The Graveyard

To manage compute resources locally, Adytum implements a lifecycle manager:

- Cryostasis: Inactive daemons are serialized into `cryostasis.json`, freeing up RAM while preserving state.

- The Graveyard: Reactive workers are moved to a historical log (`graveyard.json`) upon task completion for future auditing and knowledge extraction.
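A rough sketch of such a lifecycle manager follows. The schemas of `cryostasis.json` and `graveyard.json` are not given here, so the `AgentState` and `GraveyardEntry` shapes are assumptions, and the two files are modeled as in-memory JSON strings rather than real disk writes to keep the example self-contained.

```typescript
// Hypothetical shapes: the real file schemas are not documented here.
interface AgentState { id: string; kind: "daemon" | "reactive"; memory: string[]; }
interface GraveyardEntry { id: string; finishedAt: string; summary: string; }

class LifecycleManager {
  private active = new Map<string, AgentState>();
  // In-memory stand-ins for the contents of cryostasis.json and graveyard.json.
  cryostasisJson = "{}";
  graveyardJson = "[]";

  spawn(agent: AgentState): void {
    this.active.set(agent.id, agent);
  }

  isActive(id: string): boolean {
    return this.active.has(id);
  }

  // Serialize an idle daemon, then drop the live object so its memory can be reclaimed.
  freeze(id: string): void {
    const agent = this.active.get(id);
    if (agent === undefined) throw new Error(`no active agent ${id}`);
    const frozen = JSON.parse(this.cryostasisJson) as Record<string, AgentState>;
    frozen[id] = agent;
    this.cryostasisJson = JSON.stringify(frozen);
    this.active.delete(id);
  }

  // Restore a frozen daemon with its saved state intact.
  thaw(id: string): AgentState {
    const frozen = JSON.parse(this.cryostasisJson) as Record<string, AgentState | undefined>;
    const agent = frozen[id];
    if (agent === undefined) throw new Error(`no frozen agent ${id}`);
    delete frozen[id];
    this.cryostasisJson = JSON.stringify(frozen);
    this.active.set(id, agent);
    return agent;
  }

  // Retire a finished reactive worker into the append-only audit log.
  bury(id: string, summary: string, now: Date = new Date()): void {
    if (!this.active.delete(id)) throw new Error(`no active agent ${id}`);
    const log = JSON.parse(this.graveyardJson) as GraveyardEntry[];
    log.push({ id, finishedAt: now.toISOString(), summary });
    this.graveyardJson = JSON.stringify(log);
  }
}
```

Keeping the graveyard append-only is a design choice of this sketch: it makes the log safe to scan later for auditing without worrying about entries being rewritten.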

💾 Pillar 2: Cognitive Memory & Knowledge Management

Memory in Adytum is loosely modeled on the human hippocampal system, moving beyond simple context-window stuffing.

2.1 The Dual-Layer Persistence Paradigm

Adytum uses an SQLite-backed MemoryDB with two distinct operational tracks:

- Episodic Memory (Short-Term): Every message, tool call, and 'thought' is stored. A hybrid search (FTS5 full-text matching combined with KNN lookup over embeddings) retrieves the most relevant past episodes based on current context.

- Semantic Knowledge Graph (Long-Term): Discrete facts (e.g., "User prefers Rust over Python") are extracted as entity-relationship nodes. These provide the agent with a grounded understanding of the user’s world map.
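To make the episodic retrieval step concrete, here is a minimal sketch of hybrid ranking. It is a stand-in, not the MemoryDB code: a simple term-overlap score substitutes for the FTS5 rank, a brute-force cosine similarity substitutes for the KNN index, and the `Episode` shape, the `alpha` weighting, and all function names are assumptions.

```typescript
// Hypothetical record shape; embeddings are assumed to share one dimensionality.
interface Episode { id: number; text: string; embedding: number[]; }

// Cosine similarity between two equal-length vectors (0 if either is all zeros).
function cosine(a: number[], b: number[]): number {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  return normA === 0 || normB === 0 ? 0 : dot / Math.sqrt(normA * normB);
}

// Fraction of query terms present in the text: a crude stand-in for an FTS5 rank.
function keywordScore(query: string, text: string): number {
  const terms = query.toLowerCase().split(/\s+/).filter((t) => t.length > 0);
  if (terms.length === 0) return 0;
  const words = new Set(text.toLowerCase().split(/\W+/));
  return terms.filter((t) => words.has(t)).length / terms.length;
}

// Blend both signals and return the k best episodes, highest score first.
function retrieve(
  query: string,
  queryEmbedding: number[],
  episodes: Episode[],
  k: number,
  alpha = 0.5, // weight of the keyword signal; (1 - alpha) goes to the vector signal
): Episode[] {
  return episodes
    .map((episode) => ({
      episode,
      score:
        alpha * keywordScore(query, episode.text) +
        (1 - alpha) * cosine(queryEmbedding, episode.embedding),
    }))
    .sort((x, y) => y.score - x.score)
    .slice(0, k)
    .map((scored) => scored.episode);
}
```

Blending the two scores lets an exact keyword hit and a semantically close episode both surface, which neither signal achieves alone.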

2.2 Autonomous Memory Categories

Memories are automatically classified into categories such as episodic_summary, user_fact, curiosity, and dream. This categorization allows for tiered retrieval—querying the "user_fact" table during a greeting, but searching "episodic_summary" during a code review.
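The tiered retrieval described above amounts to a lookup from the current situation to the categories worth querying. The sketch below assumes a hypothetical `Situation` type and a flat in-memory store; only the four category names come from the text.

```typescript
// Category names are taken from the text; everything else is illustrative.
type MemoryCategory = "episodic_summary" | "user_fact" | "curiosity" | "dream";
type Situation = "greeting" | "code_review"; // hypothetical situation labels

interface Memory { category: MemoryCategory; text: string; }

// Which categories to consult in which situation.
const retrievalPlan: Record<Situation, MemoryCategory[]> = {
  greeting: ["user_fact"],
  code_review: ["episodic_summary"],
};

// Return only the memories whose category is relevant to the situation.
function recall(situation: Situation, store: Memory[]): Memory[] {
  const wanted = retrievalPlan[situation];
  return store.filter((memory) => wanted.indexOf(memory.category) !== -1);
}
```

Restricting each query to a subset of categories keeps irrelevant memories out of the prompt and shrinks the search space for every lookup.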

🧩 Pillar 3: Extensible Skill System

Adytum is an evolving platform. It leverages a Plugin-Based Skill Architecture that decouples functional capability from core logic.

- Dynamic Discovery: The `SkillLoader` scans the workspace at runtime for `adytum.plugin.json` manifests.

- Hot-Reloading: Developers can update skills without restarting the Gateway. A filesystem watcher triggers a live refresh of the agent's system prompt and tool definitions.

- Dependency Guarding: Skills only activate if their specific system requirements (OS, binary dependencies, or API secrets) are met, ensuring runtime stability.
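Dependency guarding can be sketched as a pure check of a manifest against a description of the host. The manifest fields below (`requires.os`, `requires.binaries`, `requires.env`) are assumptions made for illustration; the actual `adytum.plugin.json` schema is not shown here. The host description is passed in as a plain object so the example does not depend on any runtime API.

```typescript
// Hypothetical manifest fields; the real adytum.plugin.json schema may differ.
interface SkillManifest {
  name: string;
  requires?: { os?: string[]; binaries?: string[]; env?: string[] };
}

// A snapshot of the host, supplied by the caller.
interface HostInfo {
  platform: string;
  binaries: Set<string>;
  env: Record<string, string | undefined>;
}

// List every requirement the host fails to meet; an empty list means "safe to activate".
function unmetRequirements(manifest: SkillManifest, host: HostInfo): string[] {
  const requires = manifest.requires ?? {};
  const problems: string[] = [];
  if (requires.os && requires.os.indexOf(host.platform) === -1) {
    problems.push(`os:${host.platform}`);
  }
  for (const binary of requires.binaries ?? []) {
    if (!host.binaries.has(binary)) problems.push(`binary:${binary}`);
  }
  for (const key of requires.env ?? []) {
    if (!host.env[key]) problems.push(`env:${key}`);
  }
  return problems;
}

// Names of the skills whose requirements are all satisfied.
function activeSkills(manifests: SkillManifest[], host: HostInfo): string[] {
  return manifests
    .filter((manifest) => unmetRequirements(manifest, host).length === 0)
    .map((manifest) => manifest.name);
}
```

Returning the list of unmet requirements, rather than a bare boolean, makes it easy to tell the user why a skill stayed inactive.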

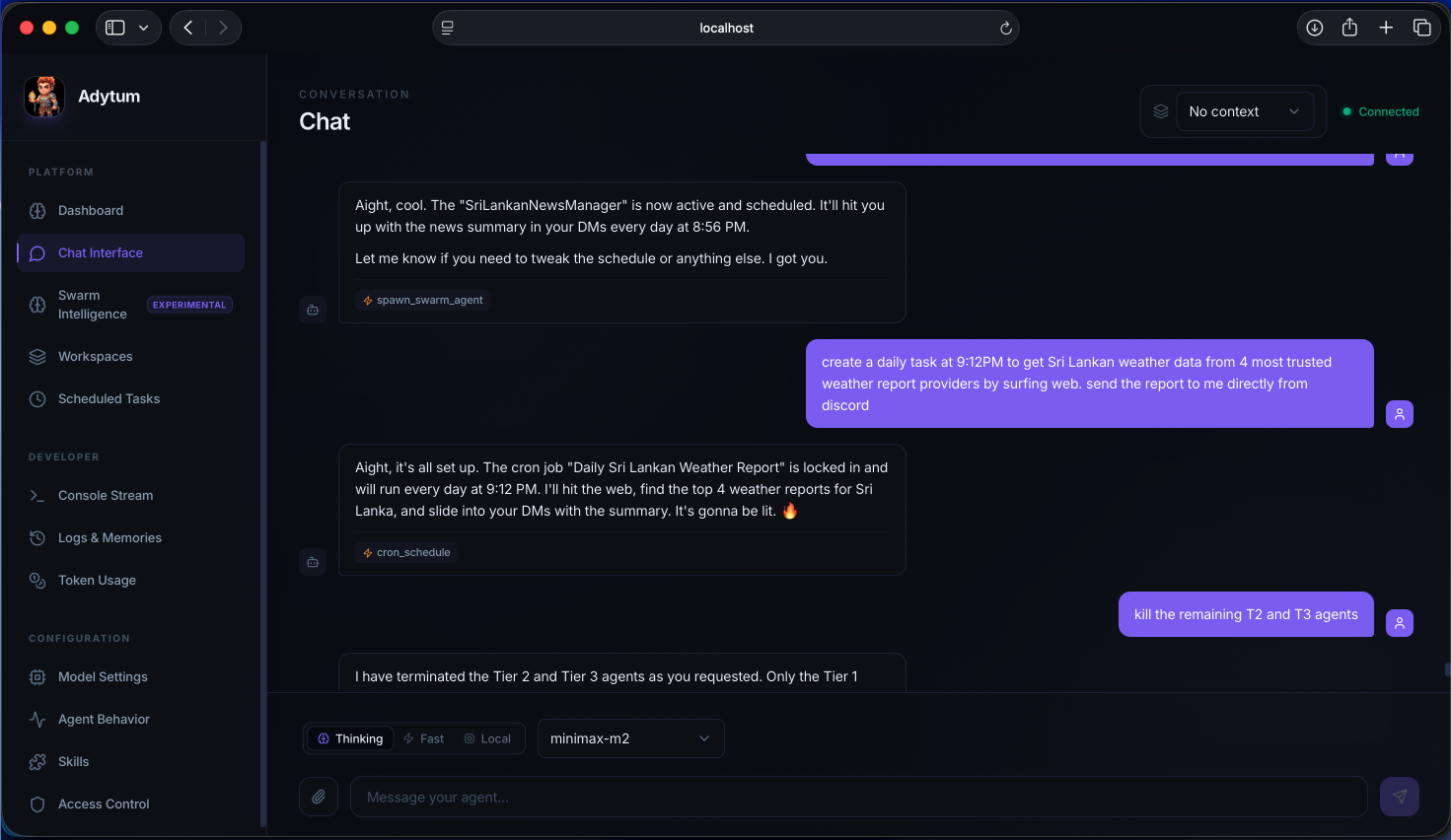

⚡ Pillar 4: Proactive Autonomy & Event Execution

Unlike passive chatbots, Adytum is designed to act without being prompted, driven by an internal event-driven backbone.

4.1 The Heartbeat & Dreamer Routines

- HeartbeatManager: Periodically checks the `workspace/HEARTBEAT.md` file to evaluate progress against long-term goals.

- The Dreamer Routine: During system idle periods, Adytum enters a "reflect" state. It scans recent logs, compacts verbose history into core memories, and may even evolve its own `SOUL.md` (personality matrix) based on recent interactions.
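The idle-triggered compaction step of the Dreamer can be illustrated as follows. This is a sketch under stated assumptions: the `Dreamer` class, its idle threshold, and the `CoreMemory` shape are hypothetical, and the summarizer is an injected function standing in for whatever model call performs the actual compaction. Timestamps are passed explicitly so the behavior is deterministic.

```typescript
// Hypothetical shapes for illustration only.
interface LogEntry { timestamp: number; text: string; }
interface CoreMemory { category: "dream"; from: number; to: number; summary: string; }
type Summarizer = (texts: string[]) => string; // stand-in for an LLM summarization call

class Dreamer {
  private idleMs: number;
  private summarize: Summarizer;
  private lastActivity: number;

  constructor(idleMs: number, summarize: Summarizer, now: number) {
    this.idleMs = idleMs;
    this.summarize = summarize;
    this.lastActivity = now;
  }

  // Record user or agent activity, which postpones the next reflection.
  touch(now: number): void {
    this.lastActivity = now;
  }

  isIdle(now: number): boolean {
    return now - this.lastActivity >= this.idleMs;
  }

  // When idle, compact the whole log into one core memory; otherwise do nothing.
  reflect(log: LogEntry[], now: number): CoreMemory | null {
    if (!this.isIdle(now) || log.length === 0) return null;
    return {
      category: "dream",
      from: log[0].timestamp,
      to: log[log.length - 1].timestamp,
      summary: this.summarize(log.map((entry) => entry.text)),
    };
  }
}
```

In a running system a periodic timer would call `reflect` with the current time; any activity in between calls `touch`, so reflection only fires after a genuinely quiet stretch.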

4.2 Environmental Sensors

Background sensors act as nervous system inputs. For example, a file watcher can detect a git commit and trigger the Architect to proactively analyze the changes before the user even asks.
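A sensor-to-Architect path like the one described can be sketched with a small typed event bus. The event name `git.commit`, the payload fields, and the `architectInbox` queue are all hypothetical; a real sensor would be a filesystem watcher calling `emit`, which is simulated here by a direct call.

```typescript
// Hypothetical event shape and bus; names are illustrative only.
interface SensorEvent { type: string; payload: Record<string, string>; }
type Handler = (event: SensorEvent) => void;

class EventBus {
  private handlers = new Map<string, Handler[]>();

  // Subscribe a handler to one event type.
  on(type: string, handler: Handler): void {
    const list = this.handlers.get(type) ?? [];
    list.push(handler);
    this.handlers.set(type, list);
  }

  // Deliver an event to its subscribers and report how many were notified.
  emit(event: SensorEvent): number {
    const list = this.handlers.get(event.type) ?? [];
    list.forEach((handler) => handler(event));
    return list.length;
  }
}

const bus = new EventBus();

// Stand-in for the Architect's task queue.
const architectInbox: string[] = [];

// When a commit is detected, queue a proactive analysis goal for the Architect.
bus.on("git.commit", (event) => {
  architectInbox.push(`Analyze commit ${event.payload.sha}`);
});
```

Decoupling sensors from the Architect through a bus means new sensors can be added without touching the planning code; they only need to agree on event names.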

🎯 Conclusion: The Future of Agentic Computing

Adytum demonstrates that self-hosted, multi-agent systems can achieve a level of context-awareness and proactivity that passive cloud-based models cannot match. By treating an agent as a persistent entity with a lifecycle, memory, and specialized skills, we move closer to a future where AI is not just a tool we use, but a digital collaborator that grows with our workspace.

Technical Stack Overview:

- Backend: Node.js, Fastify, TypeScript, Tsyringe DI.

- Persistence: SQLite (MemoryDB), FTS5 Indexing, JSON-based storage for lifecycles.

- Frontend: Next.js 15, Socket.io (Real-time Swarm Metrics).

- Communication: Point-to-Point and Broadcast Swarm Messaging.

Interface Design

Visual High-Fidelity

- Agent Swarm Visualization: Hierarchical Swarm Dynamics

- Real-Time Interaction: Chat & Active Debugging

- Skill Development: Dynamic Tool Management